You can read this post on Medium.

Allegedly, quantum computers can do things that classical computers can’t. A phenomenon we describe as quantum supremacy. Yet, even tech giants despair in the pursuit of it. Why do Google, IBM, Microsoft, and others act like donkeys with a carrot dangled before their nose?

It is all his fault! OK, not all of it. But a lot. In 1994, Peter Shor formulated his famous quantum algorithm that factors integers exponentially faster than any known classical algorithm. It caused a lot of excitement beyond academia because modern encryption builds upon the fact that it takes thousands of years to find the prime factors of an arbitrarily large number. If we could factor such numbers in a few minutes, prime-factor-based encryption collapsed like a house of cards.

Apparently, we haven’t experienced this apocalypse yet. Shor’s algorithm is unsuited to prove quantum supremacy in practice today. Be assured nobody can read your encrypted messages. Except for the NSA, maybe. The simple reason is that Shor’s algorithm needs millions of error-corrected qubits to factor a number we’re talking about. State-of-the-art quantum computers have about 100 qubits. And, these are error-prone, too.

So, our secrets remain secure for a while. Yet, the competition for proving quantum supremacy in practice has heated up. In 2019, Google reported that their 53-qubit quantum device “Sycamore” solved a task in a few minutes that would take today’s most powerful supercomputers thousands of years.

IBM, a major competitor in the development of quantum computers, objected immediately. They argued their classical supercomputers could do the same task in 2.5 days. If you ask me, solving a task in minutes that a supercomputer takes days is still very impressive. Yet, it is not what we mean by quantum supremacy.

Where do such extraordinary expectations come from?

Quantum supremacy does not mean a slight advantage. On the contrary, it implies that the speed of a quantum computer vastly exceeds that of classical computers. But, why do we expect that? In other words, why should a quantum computer be faster than a classical computer?

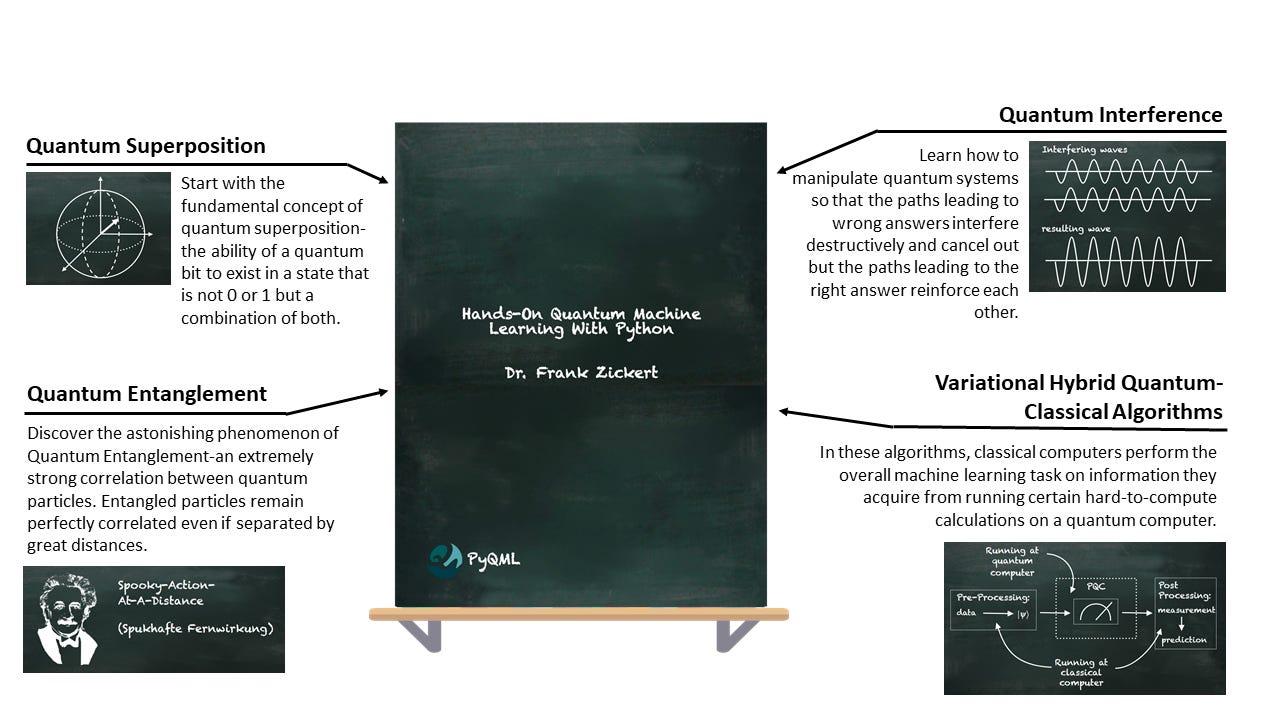

The predominant explanation of a quantum computer’s advantage over a classical computer is that we can prepare quantum bits in a superposition of an exponential number of states. Then, the quantum algorithm computes all possible inputs at the same time.

Sounds great. Yet, it appears pretty anecdotal. And, unfortunately, the description often ends at this point. If quantum computers can compute an exponential number of states concurrently, why don’t we see them everywhere? I mean, with 53 qubits, we could compute 2^53=9,007,199,254,740,992 states — at once.

Ok, we can see where the expectations come from. But then, where’s the problem?

Why do they all struggle with exploiting the advantages of a quantum computer?

Another popular explanation pins down the advantage of quantum computers based on the machinery of the computational complexity theory. Complexity theory is the study of the computational effort required to run an algorithm. And, what makes quantum computing so powerful is the algorithms it makes possible. Quantum algorithms may exhibit different complexity characteristics than their classical equivalents.

For instance, the computational effort of addition is O(n). This means that the effort of adding two numbers increases linearly with the size (digits) of the number. The computational effort of multiplication is O(n²). The effort increases by the square of the number size. These algorithms are said to be solvable in polynomial time. Therefore, they belong to class P problems.

But these problems are comparably simple. In contrast, the best classical algorithm for solving the problem of factorization, finding the prime factors of an n-digit number, is O(e^{n^{1/3}}). It means that the effort increases exponentially with the number of digits.

Do you want a taste of it? Great! Try to solve the following factorization problems without a computer.

Find the prime factors of the number 21.

Find the prime factors of the number 221.

Find the prime factors of the number 2231.

Find the prime factors of the number 22523.

I am pretty sure you’ll solve the first task very easily. Maybe, you come up with the solution right away. When you cope with the second problem, try to count the number of attempts.

You may start with 11x13=143. That’s too low. Then, you calculate 17x19=323. That’s too high. So, how about 13x17? This is 221 — the solution. It took three attempts.

You’ll likely need a lot more attempts to find the factors for 2231. And, I believe you won’t be able to find those of 22523 at all.

Let’s consider a polynomial task for a change.

Multiply 3 by 7.

Multiply 13 by 17.

Multiply 23 by 97.

Multiply 101 by 223.

Again, you’ll see that each task is harder than the previous one. But you’ll be able even to solve the last one with pen and paper.

This is the difference between O(e^{n^{1/3}}) (factorization) and O(n²) (multiplication) complexity. It must not be underestimated. While your smartphone can multiply numbers with 800 digits in a few seconds, the factorization of such numbers takes about 2,000 years on a supercomputer.

The complexity illustrates vividly what we expect from a quantum computer. And the race for quantum supremacy continues.

Very recently, in 2021, a Chinese team claimed their quantum computer “Zuchongzhi” solved a problem in an hour that would have taken eight years classically. Quite astonishing.

Nevertheless, we do not experience the effects of quantum supremacy in our everyday lives. That’s because there’s a catch! The tasks “Sycamore” and “Zuchongzhi” solved were purposely designed to demonstrate the quantum computer’s superiority. These problems aren’t of much practical interest otherwise. We’re still waiting for someone to demonstrate solving a relevant problem with a quantum computer.

But why is it so hard to solve relevant problems with a quantum computer?

If you have taken the trouble to calculate the examples above, you’ll have noticed that the multiplications are the results of the factorization problems. So, interestingly, the complexity of factorization grows exponentially. But if you know the solution, you can verify that it is correct in polynomial time. Then, we say the overall problem has a non-deterministic polynomial (NP) complexity.

So, while the evaluation of a candidate is easy and straightforward, the complexity originates from selecting the correct answer out of an exponentially growing number of candidates. And, this is where the first explanation of quantum supremacy comes into play. Remember, the number of states a quantum computer can evaluate concurrently grows exponentially with the number of qubits.

In layman’s terms, we remove the “non-deterministic” from non-deterministic polynomial complexity. And, a polynomial complex task is easy to solve. But this removal is not as easy as it sounds. It is a challenging task.

There are many known NP-hard problems, such as the boolean satisfiability problem, the traveling salesman problem, or the max-cut problem. Developing an algorithm that exploits the potential quantum supremacy requires a deep understanding of the problem and where its specific complexity comes from.

For instance, Peter Shor knew that the Fourier Transform operation was a good tool to find frequencies and that it is useful to solve factorization. His true achievement then was the discovery of the Quantum Fourier Transform.

But we can’t use the same technique to solve other NP-hard problems. We need savvy domain experts who understand enough of the problem and the specificity of quantum algorithms to discover how to solve them.

Apparently, this is a complex problem.